After upgrading one of our vCenter Server appliances to 6.5 U1, we began running into 503 errors. The TL;DR version: we were getting duplicate keys within the Postgres database, mainly in the VPX_VM_VIRTUAL_DEVICE table. After a Google frenzy, I quickly saw that this issue is indeed common, but mostly for those running 6.5 GA, and it was supposed to have been addressed in Update 1. In our case, though, the issue didn't present itself at all in GA and only appeared after the upgrade to 6.5 U1. Anyway, here is a quick recap of how to troubleshoot, identify and fix this issue…

Before we get right into it though, a note: don't do this unless you are comfortable doing so. VMware has a support team for a reason, so if this is your production environment I would most certainly direct you to open a support request with VMware to resolve this issue! That said, if it's your lab or you don't have any support, make yourself a backup of your VCSA and follow at your own risk…

First up was identifying the actual issue. For me that started with a simple poll of which services were started and stopped on the VCSA. Running "/bin/service-control --status" (note the two hyphens) gives us a nice list of all of the VCSA services and whether they are running or not…

The output showed that my vmware-vpxd service, the main vCenter service, was not running, and that's where my 503 errors were stemming from. My first efforts to simply start the service were unsuccessful: running "/bin/service-control --start vmware-vpxd" simply timed out and never did anything. So it's off to the logs to see if we can find anything useful. The main vpxd service log is located at /var/log/vmware/vpxd/vpxd.log
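If you'd rather not scroll through the whole log by hand, a small helper like the one below can pull out the interesting lines. This is only a sketch: the function name is my own, and it assumes the error text contains the phrase "duplicate key" (matched case-insensitively), which may not match the exact wording in every vpxd.log.

```shell
#!/bin/sh
# Hypothetical helper: print lines mentioning a duplicate key, with line numbers.
# Assumption: the error text in the log contains the phrase "duplicate key".
# The default path is the vpxd log location mentioned above.
find_duplicate_key_errors() {
    log_file="${1:-/var/log/vmware/vpxd/vpxd.log}"
    grep -n -i 'duplicate key' "$log_file"
}

# Usage (on the VCSA), showing only the most recent matches:
#   find_duplicate_key_errors | tail -n 5
```

The line numbers make it easier to jump to the surrounding context in the log afterwards.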

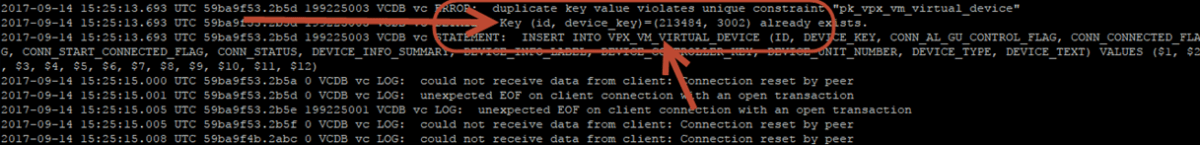

As highlighted above, we are indeed seeing a duplicate key error. It's this primary key constraint that halts everything when we try to start the vpxd service. Although vpxd.log shows that some sort of duplicate key is being inserted into our table, it doesn't tell us anything about the values it's trying to insert. To get those, we need to head over to the Postgres logs (/var/log/vmware/vpostgres/postgres-DD.log, where DD represents the day of the month).

It's here we can actually see the data that is being inserted. In our case, a record with an id of 213484 and a device_key of 3002 already exists, yet vmware-vpxd tries to insert this exact same information upon starting. If you are interested, these ids can be mapped back to actual VM names within PowerCLI: a simple "Get-VM | Where-Object { $_.Id -like '*213484' }" will return the name of the VM causing the issue. Of course, without vmware-vpxd actually running, this command will fail to execute, so it's kind of a catch-22.
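Since I ended up hunting for these values more than once, here is a rough sketch of how the id and device_key pairs could be pulled out of the Postgres log automatically. It assumes the log contains PostgreSQL's usual DETAIL line for a unique violation, in the form "Key (id, device_key)=(213484, 3002) already exists."; the function name is made up, and the column order in your log may differ.

```shell
#!/bin/sh
# Hypothetical helper: list the distinct "id device_key" pairs reported as
# duplicates in a Postgres log file.
# Assumption: the log has lines such as
#   DETAIL:  Key (id, device_key)=(213484, 3002) already exists.
extract_duplicate_keys() {
    log_file="${1:?usage: extract_duplicate_keys <postgres-log-file>}"
    # Capture the two numbers, print them space-separated, drop repeats.
    sed -n 's/.*Key (id, device_key)=(\([0-9][0-9]*\), \([0-9][0-9]*\)) already exists.*/\1 \2/p' "$log_file" | sort -u
}

# Usage (on the VCSA), using today's log as named above:
#   extract_duplicate_keys "/var/log/vmware/vpostgres/postgres-$(date +%d).log"
```

Each output line is one id and device_key pair, so repeated failed start attempts for the same row show up only once.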

So how do we alleviate this issue so vpxd can actually insert the data? Well, we can start by deleting the existing row in the Postgres database. To do so, we must first log into our Postgres client on the VCSA to execute commands. The following command will do just that…

/opt/vmware/vpostgres/current/bin/psql -d VCDB -U postgres

Once in the Postgres environment, we can execute the following command to delete the row…obviously substituting the values with the ones shown in your own postgres.log file.

DELETE FROM vc.vpx_vm_virtual_device WHERE id='213484' AND device_key='3002';
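Because a typo in a DELETE is an unpleasant thing to discover afterwards, it can help to generate the statement rather than type it. The sketch below only prints SQL and never touches the database; the function names are my own, and the SELECT is an extra step I've added so you can check that exactly the row you expect exists before removing it.

```shell
#!/bin/sh
# Hypothetical helpers: build the cleanup SQL for one duplicate row.
# Nothing here runs against the database; it only prints statements.

# Succeeds only if the argument is a non-empty string of digits.
is_integer() {
    case "$1" in
        ''|*[!0-9]*) return 1 ;;
        *) return 0 ;;
    esac
}

build_cleanup_sql() {
    if ! is_integer "$1" || ! is_integer "$2"; then
        echo "usage: build_cleanup_sql <id> <device_key> (both numeric)" >&2
        return 1
    fi
    # First a SELECT to review the row, then the DELETE from the article.
    printf "SELECT id, device_key FROM vc.vpx_vm_virtual_device WHERE id='%s' AND device_key='%s';\n" "$1" "$2"
    printf "DELETE FROM vc.vpx_vm_virtual_device WHERE id='%s' AND device_key='%s';\n" "$1" "$2"
}

# Usage: print the statements, review them, then paste them into psql:
#   build_cleanup_sql 213484 3002
```

Rejecting anything that isn't purely numeric keeps a mangled copy and paste from the log from turning into a broader delete than intended.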

After confirming the removal, we can try to start our vpxd service again. A "\q" will get you out of the Postgres environment, and we can attempt to start the vmware-vpxd service with "/bin/service-control --start vmware-vpxd". If all goes well we should now have a functioning vCenter appliance. That said, I had to do this numerous times as I kept hitting new duplicate values, so if the service doesn't start, put this article in a loop: check the Postgres log for the next offending row, delete it, and try again until it does. Not an ideal solution, and feel free to approach VMware support for a better one; however, that is exactly what I've done, and they said to just monitor it and delete the duplicates…

So with that, I hope this helps someone looking for a quick fix for this duplicate key issue. I'd be interested to hear if you have experienced this with 6.5 Update 1 as well, since it's supposed to be addressed in the patches! Let me know in the comments if you wish… Thanks for reading!
